Image generated by Nano Banana.

It’s 2030. Your kid’s favorite show wasn’t made by humans.

It wasn’t made by “a studio.” Not “a team.” Not even a broke animator riddled with student loan debt eating cold ramen at 2AM while nursing carpal tunnel and an ulcer.

Instead, it was made by a handful of people with a laptop and an AI pipeline that spits out seven-minute episodes faster than you can microwave your kid’s dinosaur-shaped nuggets. The characters are consistent. The voices are human-sounding and charming. The moral lessons are gentle enough to pass your parental filter for what your kids should be allowed to watch. And somewhere between episode 4 and episode 40, you stop and reflect on what’s actually happened.

But is that 2030, or is it much sooner?

With the explosion of generative AI (image models, video models, synthetic voices, the whole kit), the hardest part of making moving images isn’t production anymore. Which means the goalposts have moved. If anyone can generate something that looks like a show, then the real bottleneck isn’t “Can you make it?” It’s “Can you make something worth watching?”

“But AI will never be filmmaking!” a young filmmaker screams…

That’s the future people keep sidestepping when they argue about “AI and filmmaking.” And before we get into the doom, we need to retire that word. Filmmaking is basically a museum term now: celluloid stopped being the default a long time ago, and even projection is quietly disappearing. What we’re actually talking about here is visual storytelling. A way to trap an idea inside light and sound, throw it across a room into someone else’s mind, and share it with the world.

Define it that way and the conclusion gets uncomfortable fast…

Generative AI is going to change storytelling the way fire changed food: permanently, and for everyone.

But the canary in the coal mine is not live-action prestige TV. It’s not the Oscar-bait drama either. Some of those may be artistically fulfilling, but financially they most certainly are not.

It’s animation. And the canary just won a million dollars in Dubai.

Recently, a Tunisian filmmaker won $1 million at Dubai’s 1 Billion Followers Summit for a nine-minute short film called “Lily,” created entirely using Google’s generative AI tools.

And the film looks… good. Not “Pixar had a baby with Studio Ghibli” good. But good in the way that matters commercially. It has a coherent style. It has a tone. It has character consistency. It even has that thing some Hollywood movies and shows have been lacking these days: story. It has that stop-motion-ish tactility that makes your brain forgive the occasional weirdness because the vibe is intentional.

If you’re untrained, you’ll call it impressive. If you’re trained, you’ll call it alarming.

Because the thing people keep missing is that animation is where the “uncanny valley” problem gets smaller. When you’re trying to make photoreal humans, the valley is deep and full of corpses. When you’re making stylized characters, especially for kids, the audience is far more forgiving. Kids do not sit there grading subsurface scattering and eye moisture like a Reddit VFX jury. They care about having fun with the pacing, the emotion, and whether the dog dad is actually funny.

Which brings us to the industry’s favorite question: “When will AI have its Toy Story moment?”

But that’s the wrong question.

The question is: When will AI have its Bluey moment?

Forget Toy Story. The real prize is Bluey.

A Pixar film is an event. It’s two hours, theater tickets, maybe a babysitter, and with inflation being what it is, nobody got time for that.

A Bluey episode runs 7 to 9 minutes.

Seven minutes is a weapon. Seven minutes is “we can squeeze one in before bed.” Seven minutes is “fine, one more, then we’re done,” which is the lie parents tell themselves right before they get caught in their own Instagram algorithm, wanting to check out for a bit because they’re millennials and extremely burned out on work and the world.

And Bluey, a $2 billion piece of IP, doesn’t just win because it’s short. It wins because it’s good. It’s emotionally literate. It writes to the parent on the couch just as much as the kid on the carpet. And to boot, it can handle adult-sized themes without turning into a PSA. One episode that comes to mind, “Onesies,” is widely discussed for touching on infertility and the ache people carry around it. I know that episode touched one household: mine. And I thank the writers for it.

But remember, kids are also the easiest market to satisfy visually and the most valuable market to own behaviorally.

Kids will watch the same episode 40 times. They build rituals around it. Parents buy merch because it’s cheaper than therapy. And the short runtime makes it frictionless.

7 minutes. That’s the target. That’s the crown jewel. The show that becomes part of the household’s rhythm.

Doug Shapiro’s point, and why it should scare you.

For those of you who don’t know him, Doug Shapiro is a veteran media strategist: a Wall Street analyst turned Time Warner/Warner Media executive (ex-Turner CSO) who now publishes The Mediator and advises on how AI is reshaping entertainment economics. Check it out if you haven’t.

I recently listened to Doug Shapiro speak at a virtual Future Film event.

He said two things that really stuck with me.

1. “Gen.AI does not need to produce comparable quality. It only needs to compete for time.”

2. “Hollywood’s main value-add has been its checkbook, and it’s not gonna be as important going forward.”

I couldn’t agree more.

He makes the argument plainly: animation is the canary. In his breakdown of Ben Affleck’s “AI will just make production 30% cheaper” take, he points out that AI doesn’t have to replace actors to gut budgets because above-the-line costs are often a minority of spend. And then he drops the part that should make every studio exec reach for the antacids.

Shapiro cites Where the Robots Grow, an AI-enabled animated feature: 87 minutes, “a team of nine people,” “three months,” “$8,000 per minute,” and “99% less cost than the average Pixar film.”

That’s not “a little efficiency.” That’s a new species. And video editors like myself, who have been using AI throughout this period of innovation, will most likely be at its head.

And notice the quiet killer in Shapiro’s framing: it doesn’t have to be Pixar quality today. It just has to be good enough for a certain audience, at a certain price point, distributed at internet scale.

Which is why animation is first. Animation, especially stylized animation, is mostly a pipeline problem. And pipelines are exactly what AI is good at turning into a blunt force instrument. (I’m looking at you, ComfyUI.)

We’ve seen this movie before: “jerky,” “soulless,” and then unstoppable.

People love to pretend today’s “AI slop” discourse is unique. It isn’t. Every new tool gets insulted before it gets normalized.

Ed Catmull wrote about early computer animation and a technical issue that made audiences react badly. When moving objects were too perfectly in focus, theatergoers experienced an “unpleasant, strobe-like sensation,” which they described as “jerky.”

That’s the pattern: first it looks wrong, then someone fixes a handful of problems, then the audience stops caring about the tool and starts caring about the story. Then the tool becomes the new normal and the old guard starts pretending they loved it all along.

AI animation is barreling toward that normalization. It’s not there yet. But the progress curve is not subtle.

The trap: habituation and the AI “smell”.

Now, it’s not all sunshine and rainbows here. Even though I recognize what generative AI is capable of, I’m not going to turn a blind eye to the unpleasant things I also see.

Here’s the part the hype people skip because it’s inconvenient: habituation.

The APA dictionary defines habituation as “the diminished effectiveness of a stimulus in eliciting a response” after repeated exposure.

In plain English: the brain gets bored.

A lot of AI-generated visuals already carry an “AI smell.” Same lighting. Same smoothness. Same safe compositions. Same eerie competence. It’s not that it’s bad. It’s that it’s same-y, and your nervous system learns it fast.

So no, you can’t just rely on the models and expect enduring IP. If you want characters people love for years, you need intentionality and authorship.

Which is where the practical solution looks almost boring: start with humans.

If you want to protect IP and avoid the gray-zone hell of “who owns what,” don’t prompt your way into your main character. Commission an artist. Develop real concept art. Build a style bible. Make the characters yours in a way you can defend. Then use AI to accelerate the labor that used to make animation slow and expensive: backgrounds, motion variations, shot generation, lip sync, cleanup, versioning, localization.

Let AI be a machine that speeds up your voice, not a machine that replaces having one.

So what happens when the barrier breaks?

Have you ever heard of the Bannister effect? No? Good.

On May 6, 1954, at the Iffley Road Track in Oxford, England, Roger Bannister became the first person ever to run a sub-four-minute mile, finishing in 3:59.4. It basically blew everyone’s collective mind at the time. After that, more people did it, because the “impossible” story collapsed. People call it the Bannister effect: once a barrier is broken, belief shifts and imitation follows.

The same thing happens in media. One breakout rewrites what’s considered feasible. Once a small team ships an AI-assisted show that becomes a real hit, the flood will come fast and merciless.

And the flood is already coming from the creator side. Shapiro’s broader point is that Hollywood isn’t competing in a closed system. Lower barriers don’t just mean “more shows.” They mean a widening field of competitors where a tiny fraction of an internet-scale output can destabilize the economics.

Meanwhile, the attention economy is getting uglier. Taylor Lorenz’s Washington Post piece describes the “Beastification of YouTube” tied to “retention editing,” where hyper-engaging, fast-paced videos train creators into the same addictive grammar. While that approach to editing might be slowly falling out of fashion, it also grew revenue for a lot of creators to the point where their budgets resemble what I see in Hollywood.

If you’ve worked in Hollywood for any length of time, you’ve already felt the insult. There are creator budgets now that compete with, and sometimes exceed, what streamers will greenlight for episodes. It’s clear that the prestige machine is being squeezed from below and from the side.

But AI is a python that is wrapping itself around both the Creator Economy and Legacy Media at the same time. And it won’t stop tightening until something breaks: the business model, the labor model, or both.

So what will the future look like?

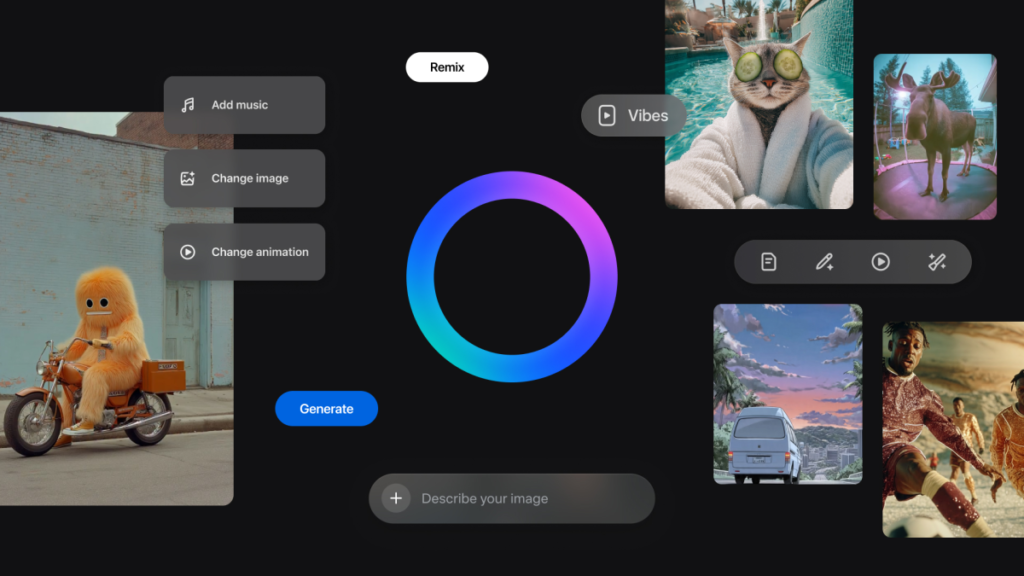

That, I think, is what’s on everyone’s mind at this point, from venture capitalists to the creatives whose industry they are funding companies to disrupt. In my view, when every platform becomes an all-you-can-eat buffet of synthetic cartoons, the value shifts from making more to choosing well.

That is why the next power platform might not be the one that generates the most. It might be the one that curates the best. A service that says: we’ve filtered the flood. No sludge. No weird knockoff voices. No borderline-infringing character designs. Just stuff you’d actually let play in your living room without feeling like you’re mainlining algorithmic junk into your kid’s brain.

The brand promise becomes taste and trust, not volume.

And here’s the twist that should make Hollywood stop preening and start thinking: the streamers could end up surviving by becoming that curated layer. The creator-to-streamer pipeline is already forming. MrBeast built a massive Amazon Prime Video franchise with Beast Games. Netflix is signing up major YouTube talent like Mark Rober for new shows.

That looks, to me, like the early stages of a licensing and packaging business where streamers become the safe, polished storefront for internet-native studios that out-iterate Hollywood. So yeah, it’s possible the endgame isn’t a brand-new platform. It’s a reshuffling of power where open platforms become the chaotic R&D lab, AI shrinks production into small teams, and the “premium” services turn into the distribution moat again, not because they can outspend everyone, but because they can still curate.

In 2030, your kid’s favorite show might not be made by humans. But the big question will be: who made the decision to put it in front of your kid, and why should you trust them?