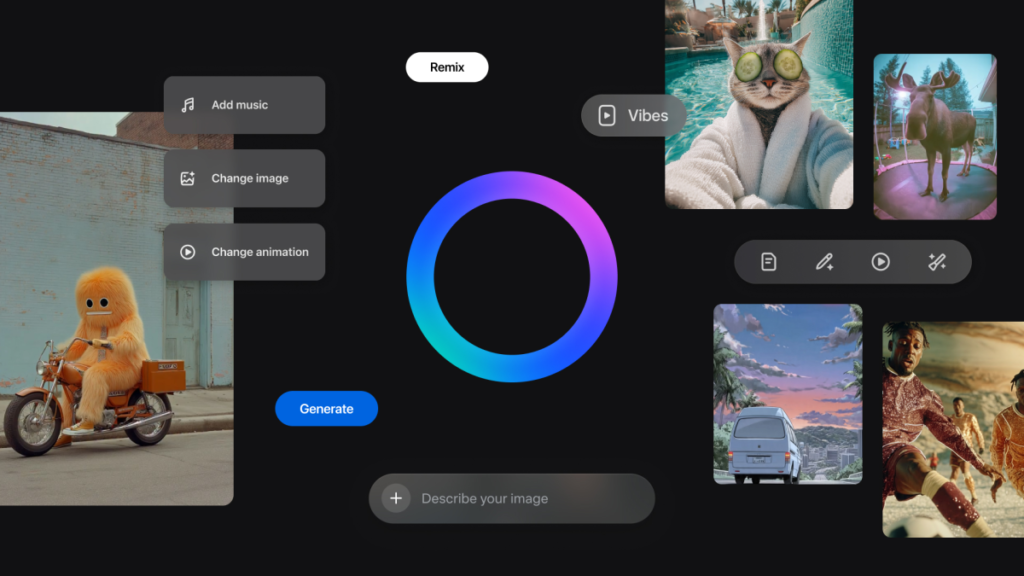

Luma AI has rolled out Ray3.14, a significant upgrade to its reasoning-based video model Ray3, aimed squarely at professional animation and cinematic production workflows.

According to the company, Ray3.14 is optimized for use cases where temporal stability, motion accuracy, and visual consistency are non-negotiable. Luma says the update improves performance across animation, video-to-video generation, and high-end cinematic work, areas where small artifacts like flicker or drift can quickly undermine production quality.

Designed for commercial creative environments, Ray3.14 introduces native 1080p video output, generation that Luma says is four times faster than previous versions, and a new pricing structure that cuts the per-second cost to one-third of what it was. Luma positions the release as an attempt to remove the long-standing trade-off between speed, cost, and quality in generative video.

“The model delivers the highest quality and stability Luma has ever achieved, excelling in animation-heavy and high-fidelity workflows where other models struggle with frequent flicker, drift, and inconsistency,” the company said.

Ray3 was originally built around a reasoning-first approach to video generation, with the model designed to understand scenes holistically rather than frame by frame. Luma says that approach allows Ray3 to maintain coherence across lighting, motion, characters, and camera behavior. With Ray3.14, that reasoning engine has been further tuned for animation and professional video production, resulting in tighter adherence to detail and more reliable outputs.

“Ray3.14 is designed for creators who need animation and video to behave like real production assets,” said Amit Jain, CEO and co-founder of Luma AI. “By delivering native 1080p, dramatically faster generation, and per-second pricing that is 3× cheaper, we’re giving advertisers and filmmakers a model that excels in animation and can be trusted for real-world creative workflows.”

Luma says the update delivers its strongest results yet for animation and video-to-video generation, with improved frame-to-frame consistency that keeps characters, environments, and visual styles stable over time. That stability, the company argues, makes the model suitable for narrative work, polished animation, and outputs that can move directly into production pipelines.

Native 1080p generation is now standard across Ray3.14’s core workflows, removing the need for post-generation upscaling and allowing footage to flow directly into editorial, finishing, and distribution for broadcast, streaming, and digital platforms.

Speed is another focus of the release. With generation running four times faster, Luma says Ray3.14 is better aligned with real production schedules, enabling creative teams to test more ideas, compare variations side by side, and reduce revision cycles. The company also claims that Ray3.14 beats competing AI video models on both time-to-first-frame and end-to-end generation time.

Ray3.14 also introduces a revised pricing model intended to support production-scale use. Luma reports that the cost of generating a typical five-second clip has dropped by a factor of three, making AI video more practical for campaigns that require multiple formats, cut-downs, or regional variations. The per-second pricing structure is designed to offer predictable costs while allowing teams to increase output without expanding budgets.
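
To make the budget arithmetic concrete, here is a minimal Python sketch of how per-second pricing scales with clip length and volume. The rates and budget are hypothetical placeholders expressed in arbitrary integer "credits", not Luma's published prices; the only figures taken from the announcement are the five-second clip length and the factor-of-three reduction.

```python
# Hypothetical per-second rates in arbitrary "credits" (NOT Luma's real prices).
OLD_RATE = 30             # assumed previous cost per second of video
NEW_RATE = OLD_RATE // 3  # the announced factor-of-three reduction


def clip_cost(seconds: int, rate_per_second: int) -> int:
    """Cost of one clip under simple per-second pricing."""
    return seconds * rate_per_second


def clips_within_budget(budget: int, seconds: int, rate_per_second: int) -> int:
    """How many whole clips of a given length fit in a fixed budget."""
    return budget // clip_cost(seconds, rate_per_second)


if __name__ == "__main__":
    budget = 3000  # hypothetical campaign budget, in the same credits
    for label, rate in (("old", OLD_RATE), ("new", NEW_RATE)):
        cost = clip_cost(5, rate)
        count = clips_within_budget(budget, 5, rate)
        print(f"{label}: {cost} credits per 5 s clip, {count} clips in budget")
```

Under these assumed numbers, a fixed budget covers three times as many five-second clips, which is the effect Luma describes for campaigns that need multiple formats, cut-downs, or regional variations.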