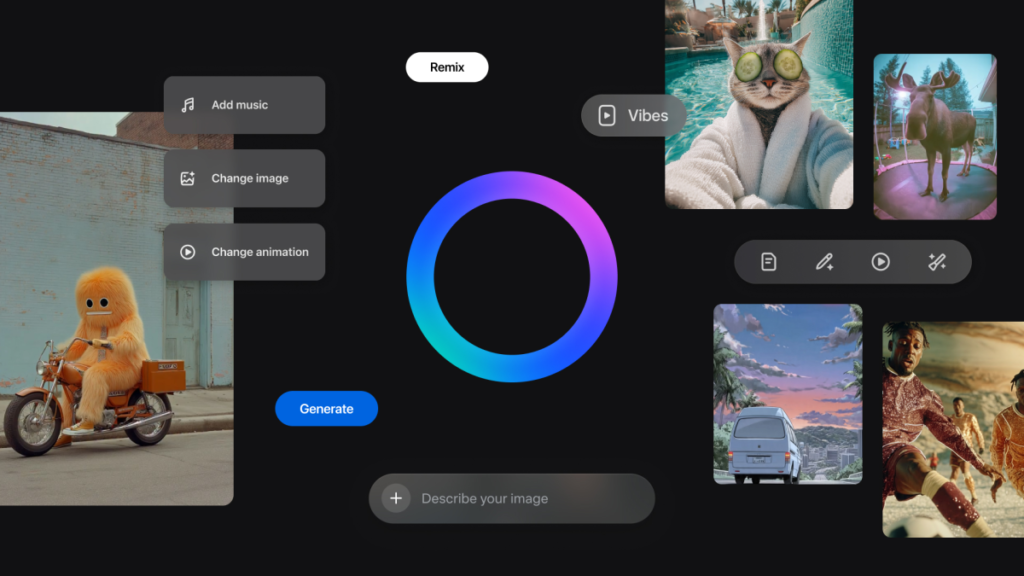

Google DeepMind has unveiled Genie 3, the latest version of its “world model” technology, which produces interactive virtual environments from simple text prompts. According to the company, the model generates explorable 3D worlds in real time at 24 frames per second and 720p resolution, and keeps them spatially and logically coherent for several minutes rather than for the length of a brief clip.

Unlike prior generative systems that output static images or short video sequences, Genie 3 builds environments that users or AI agents can roam through and interact with. That makes it a significant step toward embodied AI that understands and simulates physical rules such as motion and object permanence.

DeepMind positions these capabilities as foundational for training AI systems in digital worlds that mimic real-world dynamics, which could accelerate research in robotics, autonomous agents, and other areas where simulated experience helps teach decision-making. The company also presents the model as progress toward long-term goals such as artificial general intelligence (AGI), where AI systems can reason about and navigate complex scenarios beyond narrow tasks.

The model builds on earlier versions in the Genie family by extending how long worlds persist, improving visual fidelity, and enabling genuine on-the-fly interactivity without predesigned assets or manual coding. Developers have used Genie 3 to create indoor, outdoor, and game-like settings purely from written descriptions, illustrating both creative and technical use cases for the system.

DeepMind’s announcement frames Genie 3 as a research milestone in world modeling, highlighting its potential both as a tool for immersive content creation and as a training ground for future AI agents.